The Tip Screen Is Not a Neutral Suggestion — Here's What It's Actually Doing to Your Wallet

You've been there. The card reader swings around, the server steps back, and a screen presents you with three glowing options: 20%, 25%, 30%. Maybe there's a fourth button that says "Custom" — the one that feels vaguely rude to press. You tap 20%, pocket your card, and move on. You did the right thing. You tipped fairly.

Except the math on that screen was probably calculated on your post-tax total. And for most of American tipping history, that's not how it was supposed to work.

Where Tipping Actually Came From

Tipping in the United States has a complicated history that most people have never been told. The practice arrived from Europe in the late 1800s, initially among wealthy Americans who'd traveled abroad and brought the custom home as a kind of status signal. It wasn't universally welcomed. In fact, there was a genuine anti-tipping movement in the early 1900s — several states actually tried to ban the practice outright, arguing that it created an uncomfortable class dynamic between customers and workers.

Those bans didn't stick. And when Prohibition collapsed the restaurant industry's profit margins in the 1920s, tipping became something employers leaned into as a way to offset what they paid workers. The federal minimum wage system eventually formalized this with a separate, lower "tipped minimum wage," a structure that still exists today. Under federal law, tipped workers can be paid as little as $2.13 an hour by their employer, with the expectation that customer tips will bring them up to the regular minimum wage or beyond. Many states set a higher floor, but the two-tier structure is the same.

That context matters, because it explains what tipping was designed to compensate for. It was never meant to be a bonus on top of a living wage. For millions of servers, bartenders, and delivery workers, it is the wage.

The Pre-Tax Standard Nobody Talks About

For most of the 20th century, standard etiquette guides were clear: calculate your tip on the pre-tax subtotal. The logic was straightforward — your server didn't cook the food, set the prices, or determine your state's sales tax rate. The tip was meant to reflect the quality of service on the meal itself, not on a government levy added at the end.

Etiquette columnists repeated this for decades. The pre-tax subtotal was the baseline. A 15% tip was considered standard through much of the late 20th century, with 20% representing genuinely excellent service.

So how did we get here — to a world where 20% on the post-tax total is the assumed floor, and 25% or 30% are presented as equally reasonable defaults?

The Point-of-Sale Pivot

The shift didn't happen because of a cultural conversation or a change in etiquette guidance. It happened because of software.

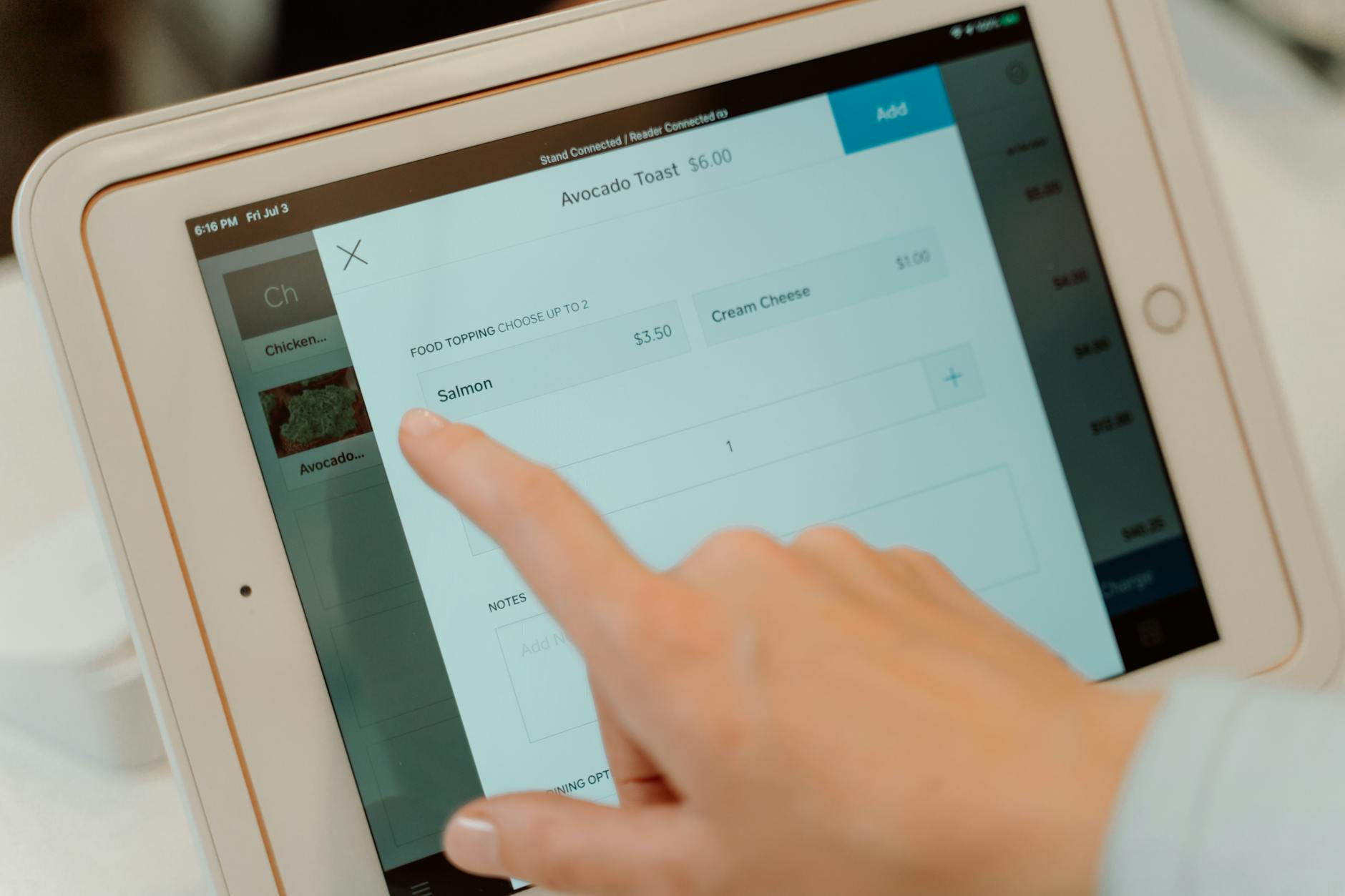

As restaurants and cafes replaced paper receipts and cash registers with tablet-based point-of-sale systems in the 2010s, those systems needed to be programmed with default tip suggestions. The companies building that software — and often the restaurant owners configuring it — had a financial incentive to set those defaults as high as felt socially acceptable. Calculating suggested tips on the post-tax total rather than the pre-tax subtotal was a small tweak that most customers would never notice, but it meaningfully increased average tip amounts across millions of transactions.
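To make that "small tweak" concrete, here is a minimal sketch of how a tip screen might turn a single setting into the numbers you see. Everything in it is hypothetical: the `TipScreenConfig` class, the `tip_on_post_tax` flag, and the `suggested_tips` function are invented for illustration and are not taken from any real point-of-sale product. The default of `True` simply mirrors the behavior described above.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP


@dataclass
class TipScreenConfig:
    # Hypothetical settings; real point-of-sale systems name these differently.
    percentages: tuple = (20, 25, 30)
    tip_on_post_tax: bool = True  # assumed default, mirroring the article's claim


def suggested_tips(subtotal: Decimal, tax: Decimal, config: TipScreenConfig) -> dict:
    """Return a {percentage: suggested tip amount} mapping for the tip screen."""
    # The one-line difference: which amount the percentages are applied to.
    base = subtotal + tax if config.tip_on_post_tax else subtotal
    cent = Decimal("0.01")
    return {
        pct: (base * Decimal(pct) / 100).quantize(cent, rounding=ROUND_HALF_UP)
        for pct in config.percentages
    }


# A $40.00 subtotal with $3.20 in tax, shown both ways.
post_tax = suggested_tips(Decimal("40.00"), Decimal("3.20"), TipScreenConfig())
pre_tax = suggested_tips(
    Decimal("40.00"), Decimal("3.20"), TipScreenConfig(tip_on_post_tax=False)
)
print(post_tax)  # 20% -> 8.64, 25% -> 10.80, 30% -> 12.96
print(pre_tax)   # 20% -> 8.00, 25% -> 10.00, 30% -> 12.00
```

The customer sees the same three buttons either way. Only the dollar amounts behind them change.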

Nobody announced this change. There was no memo. The screen just started showing you numbers, and because the numbers came from a machine rather than a person, they felt authoritative. Objective. Like they were simply telling you what tipping means now.

They weren't. They were a default setting.

How Much Does It Actually Matter?

On a modest dinner bill, the difference between tipping on pre-tax versus post-tax is a matter of cents. Take a meal with a $40 pre-tax subtotal in a state with 8% sales tax: the tax adds $3.20, for a total of $43.20. A 20% tip on the $40 subtotal is $8.00. A 20% tip on the full $43.20 is $8.64. That's a 64-cent gap. Not a dramatic difference.
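If you want to run the numbers on your own receipt, the arithmetic fits in a few lines. This is a rough sketch, not a standard formula: the function name `tip_comparison` is made up here, and the example treats $40 as the pre-tax subtotal with an 8% tax rate and a 20% tip.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def tip_comparison(subtotal: str, tax_rate: str, tip_rate: str):
    """Return (tip on pre-tax subtotal, tip on post-tax total, difference)."""
    sub = Decimal(subtotal)
    # Post-tax total, rounded to the cent as it would appear on a receipt.
    total = (sub * (1 + Decimal(tax_rate))).quantize(CENT, rounding=ROUND_HALF_UP)
    pre_tax_tip = (sub * Decimal(tip_rate)).quantize(CENT, rounding=ROUND_HALF_UP)
    post_tax_tip = (total * Decimal(tip_rate)).quantize(CENT, rounding=ROUND_HALF_UP)
    return pre_tax_tip, post_tax_tip, post_tax_tip - pre_tax_tip


pre, post, diff = tip_comparison("40.00", "0.08", "0.20")
print(f"pre-tax tip: ${pre}, post-tax tip: ${post}, difference: ${diff}")
# prints: pre-tax tip: $8.00, post-tax tip: $8.64, difference: $0.64
```

Because the tip percentage is applied to the tax as well, the gap works out to the tax amount times the tip rate, so it grows with both your local sales tax and the percentage you tap.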

But scale that across every restaurant meal, every coffee shop visit, every delivery order — and across a population of hundreds of millions of people doing this multiple times a week — and the aggregate shift is significant. For individual workers in high-volume restaurants, the difference in their take-home pay from post-tax versus pre-tax tipping adds up over the course of a year.

So What Should You Actually Do?

This isn't an argument for tipping less. Restaurant workers in most of the country depend on tips in a way that reflects a deeply embedded structural reality, and that reality doesn't change based on which line of the receipt you're calculating from.

What this is an argument for is understanding the system you're participating in. The tip screen isn't a neutral informational tool. It's a designed interface with built-in assumptions — and those assumptions have shifted over time in ways that weren't explained to you.

If you want to tip on the pre-tax subtotal, that's historically accurate and still entirely reasonable. If you tip on the post-tax total because it's easier and you want to be generous, that's also fine. The point is that you're making a choice — not just following a rule that was handed down from some authoritative source.

The screen makes it feel like there's one right answer. There isn't. There's just math, a worker who probably needs the money, and a piece of software that was programmed by someone with an interest in the outcome.

The takeaway: Tipping on the pre-tax subtotal, typically at around 15%, was the standard for most of the 20th century. The shift to post-tax calculation happened quietly through the design of digital payment systems, not through any change in etiquette norms. Understanding that doesn't mean you should tip less. It means you should tip with your eyes open.